Why Multiple Powerhouse Models Are Reshaping High-Performance Computing

Multiple powerhouse models are transforming how organizations handle demanding AI and data workloads — here is a quick overview of the key systems covered in this roundup:

| Model / Hardware | Type | Key Strength |
|---|---|---|
| NVIDIA H200 SXM | GPU | Horizontal scaling, cost efficiency |
| NVIDIA H200 NVL | Dual-GPU unit | 282GB unified memory, memory-bound tasks |
| Mistral Large 3 | AI model (675B params) | Sparse MoE, extreme token throughput |
| Nemotron 3 Nano 30B-A3B | AI model (31.6B params) | 3.3x faster inference, 1M token context |
| Qwen3.5-9B | AI model | Multimodal, runs on consumer hardware |

The demand for faster, smarter, and more scalable computing has never been higher. Whether you are training a 675-billion-parameter AI model or processing millions of tokens per second, the hardware and models you choose make a massive difference.

The gap between capable and truly high-performance systems is enormous — and it comes down to how well your models and hardware work together.

This roundup breaks down the leading systems driving that performance, so you can make an informed decision about what fits your needs.

I’m Bill French, Sr., Founder and CEO of FDE Hydro™, where I’ve spent decades applying modular innovation to complex infrastructure challenges — including multiple powerhouse models in the hydropower space. That same principle of doing more with smarter, scalable systems is exactly what drives this comparison of today’s leading high-performance computing architectures.

The Hardware Foundation: NVIDIA H200 SXM vs. H200 NVL

When we talk about multiple powerhouse models in the hardware world, the conversation inevitably leads to NVIDIA’s Hopper architecture. Specifically, the choice between the H200 SXM and the H200 NVL shapes how far an organization can scale its AI workloads, and at what cost.

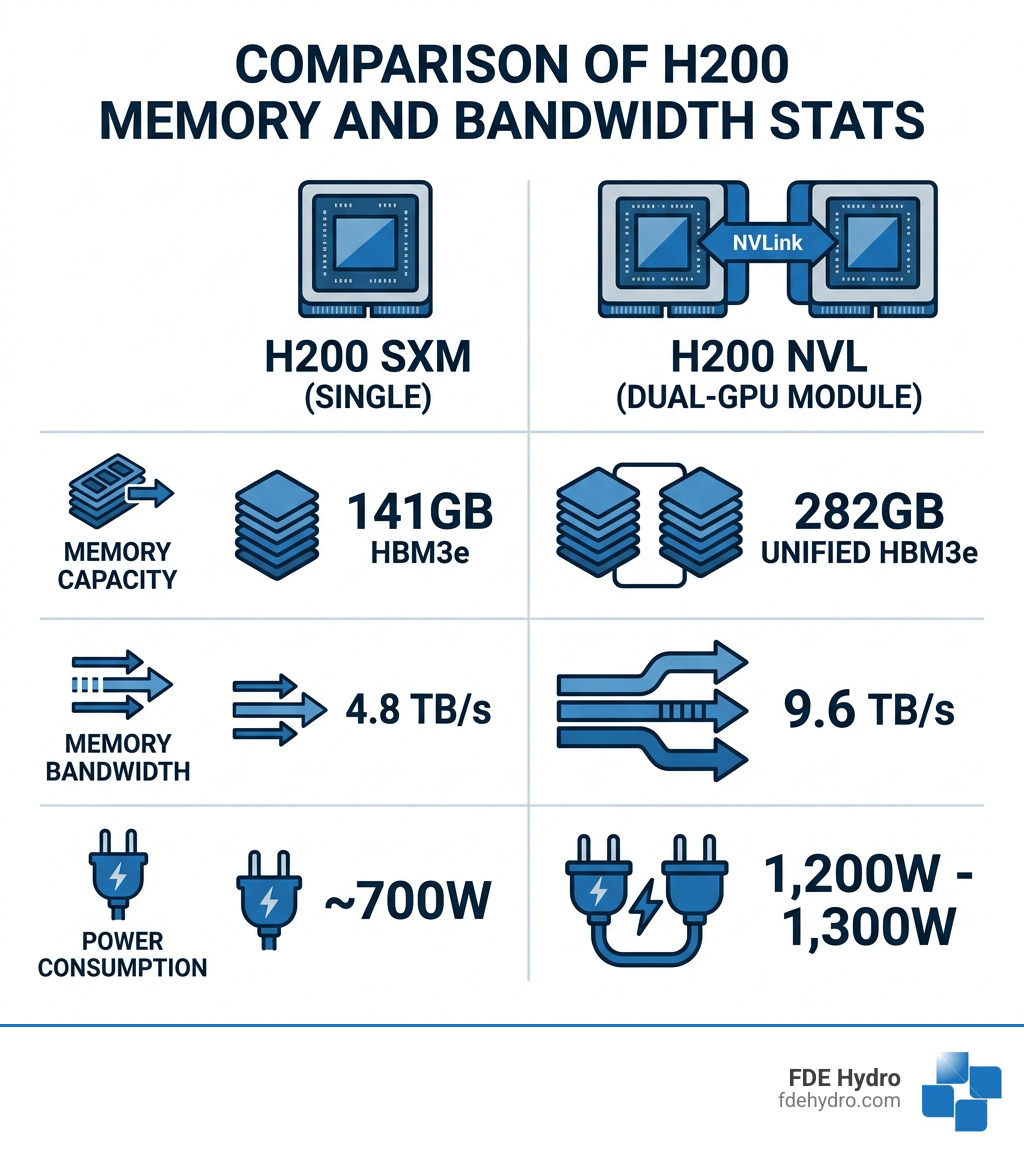

The H200 SXM is the workhorse of the modern data center. It is a single GPU module designed for horizontal scalability. In a standard HGX server, these GPUs work in parallel, each bringing its own 141GB of HBM3e memory to the table. It is the go-to choice for projects requiring flexible scaling and compatibility with standard infrastructure in places like New York or California.

The H200 NVL, by contrast, pairs two H200 GPUs into a single logical unit. A high-speed NVLink bridge merges the memory of both cards into a 282GB unified pool. This matters most when a workload hits the “memory wall”: the point at which an AI model is too large to fit into a single card’s VRAM and would otherwise have to be split across cards, which is complex and slow.

| Feature | H200 SXM (Single) | H200 NVL (Dual-GPU Module) |
|---|---|---|
| Memory Capacity | 141GB HBM3e | 282GB Unified HBM3e |
| Memory Bandwidth | 4.8 TB/s | 9.6 TB/s |
| Power Consumption | ~700W | 1,200W – 1,300W |
| Best Use Case | Horizontal scaling, CFD | Massive LLMs (70B+ parameters) |

If your organization is tackling real-time analytics or massive graph neural networks, the NVL’s 9.6 TB/s bandwidth is a game-changer. However, if you are looking for the best performance-per-dollar for compute-heavy tasks like financial modeling, the SXM remains the efficiency king. You can Explore NVIDIA’s GPU lineup for high-performance systems to see which fits your specific rack configuration.
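To make the “memory wall” concrete, here is a rough sketch of the arithmetic behind the comparison. It assumes weight memory is simply parameters times bytes per parameter, and it adds a hypothetical 20% headroom for KV cache and activations. The function names and the headroom figure are illustrative assumptions, not vendor guidance.

```python
# Rough weight-memory estimate: parameters x bytes per parameter.
# Real deployments also need room for KV cache, activations, and runtime
# overhead; the headroom fraction below is a hypothetical stand-in for that.

BYTES_PER_PARAM = {"fp16": 2.0, "fp8": 1.0, "int4": 0.5}

H200_SXM_GB = 141  # single-GPU memory quoted in the comparison table
H200_NVL_GB = 282  # unified pool quoted in the comparison table


def weight_memory_gb(params_billion: float, precision: str) -> float:
    """Approximate weight size in GB (1 GB = 1e9 bytes)."""
    return params_billion * BYTES_PER_PARAM[precision]


def fits(params_billion: float, precision: str, capacity_gb: float,
         headroom: float = 0.2) -> bool:
    """True if weights plus the assumed headroom fit in the given capacity."""
    needed = weight_memory_gb(params_billion, precision) * (1.0 + headroom)
    return needed <= capacity_gb


for name, capacity in (("H200 SXM", H200_SXM_GB), ("H200 NVL", H200_NVL_GB)):
    print(name, "holds a 70B fp16 model:", fits(70, "fp16", capacity))
```

Under these assumptions, a 70B-parameter model at 16-bit precision needs about 140GB for weights alone, which is why that size marks the crossover point between the two options.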

Scaling Intelligence with Multiple Powerhouse Models

Hardware is only half the story. Many of the models running on these chips now use “Sparse Mixture of Experts” (MoE) architectures, which route each token through a small subset of the network instead of all of it. That is how multiple powerhouse models reach very large parameter counts without a matching rise in compute per token.

Mistral Large 3 is a prime example. Of its 675 billion total parameters, it activates only 41 billion during any single forward pass. This “sparse” approach allows it to deliver industry-leading accuracy without the compute and energy cost of a fully dense model of the same size. When deployed on the NVIDIA GB200 NVL72, a rack-scale system, it achieves up to 10x higher performance than previous generations.
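The idea of sparse activation can be sketched with a toy top-k router. This is a generic illustration of MoE routing, not Mistral’s actual implementation: the scalar “experts”, the gate logits, and the function names are all hypothetical. The last line simply computes the active share implied by the 41B and 675B figures above.

```python
import math
import random


def top_k_route(gate_logits, k):
    """Pick the k highest-scoring experts and softmax-normalize their weights."""
    top = sorted(range(len(gate_logits)),
                 key=lambda i: gate_logits[i], reverse=True)[:k]
    peak = max(gate_logits[i] for i in top)  # subtract max for stability
    exps = [math.exp(gate_logits[i] - peak) for i in top]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(top, exps)]


def moe_forward(x, experts, gate_logits, k):
    """Run only the selected experts and mix their outputs by gate weight."""
    return sum(w * experts[i](x) for i, w in top_k_route(gate_logits, k))


# Hypothetical toy setup: 8 scalar "experts", only 2 evaluated per input.
experts = [lambda x, scale=scale: scale * x for scale in range(1, 9)]
random.seed(0)
logits = [random.gauss(0.0, 1.0) for _ in experts]
print(moe_forward(1.0, experts, logits, k=2))

# Share of parameters active per forward pass, from the figures in the text.
print(f"Active share: {41 / 675:.1%}")
```

Only the chosen experts run, so the cost per token tracks the active parameter count (roughly 6% of the total here) rather than the full model size.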

We’ve seen stats showing Mistral Large 3 exceeding 5 million tokens per second per megawatt. For enterprises in North America or Europe looking to deploy production-ready AI, this level of efficiency is the difference between a viable project and a budget-breaker. You can Access Mistral AI on Hugging Face to explore these checkpoints, or read more about NVIDIA-Accelerated Mistral 3 performance benchmarks to see how they stack up in real-world interactivity tests.

Architectural Innovations in Multiple Powerhouse Models

While Mistral handles the massive enterprise tasks, Nemotron 3 Nano (specifically the 30B-A3B version) is redefining what we expect from “small” models. It uses a hybrid Mamba-Transformer architecture. This is a bit like our “French Dam” technology—it takes the best parts of existing systems and combines them into something more efficient.

The Mamba layers handle long sequences with high speed, while the Transformer layers preserve high-quality reasoning. Combined with Mixture-of-Experts routing, this design activates only 3.2 billion of the model’s 31.6 billion total parameters per forward pass. The result is up to 3.3x faster inference than similarly sized models like Qwen3-30B.

This model isn’t just fast; it’s smart. It was pretrained on 25 trillion tokens, including 2.5 trillion new English tokens from the Nemotron-CC-v2.1 dataset insights collection. For those interested in the deep technical weeds, the Research on Nemotron 3 Nano architecture and efficiency explains how it maintains accuracy even when quantized to FP8.

Performance Benchmarks for Multiple Powerhouse Models

Another entry in the multiple powerhouse models hall of fame is the Qwen3.5-9B. Don’t let the “9B” fool you; this model punches well above its weight class. It uses “Gated DeltaNet” linear attention, which supports a context length of up to 1 million tokens while keeping the memory used by its attention state constant as the sequence grows.
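The constant-memory property comes from replacing a growing key-value cache with a fixed-size recurrent state. Below is a simplified, single-head sketch of a gated delta-rule update, written in plain Python for readability. It is an assumption-laden illustration, not Qwen’s implementation: production versions use learned per-token gates, multiple heads, and chunked parallel kernels, and the function names and the fixed `alpha` and `beta` values here are hypothetical.

```python
import math
import random


def gated_delta_step(S, q, k, v, alpha, beta):
    """One recurrent step: decay the state, correct it toward v along k, read with q.

    S is a d_v x d_k matrix (list of rows). Its shape never depends on how
    many tokens have already been processed.
    """
    # What the current state predicts for key k: pred = S @ k
    pred = [sum(s * kj for s, kj in zip(row, k)) for row in S]
    # S_new = alpha * (S - beta * outer(pred, k)) + beta * outer(v, k)
    S_new = [
        [alpha * (S[i][j] - beta * pred[i] * k[j]) + beta * v[i] * k[j]
         for j in range(len(k))]
        for i in range(len(v))
    ]
    out = [sum(s * qj for s, qj in zip(row, q)) for row in S_new]
    return S_new, out


def normalize(x):
    """Scale a vector to unit length (keys are normalized to keep updates stable)."""
    norm = math.sqrt(sum(a * a for a in x)) or 1.0
    return [a / norm for a in x]


d_k, d_v = 4, 3
S = [[0.0] * d_k for _ in range(d_v)]
random.seed(0)
for _ in range(1000):  # process 1,000 tokens
    k = normalize([random.gauss(0, 1) for _ in range(d_k)])
    q = normalize([random.gauss(0, 1) for _ in range(d_k)])
    v = [random.gauss(0, 1) for _ in range(d_v)]
    S, out = gated_delta_step(S, q, k, v, alpha=0.99, beta=0.5)

print("state shape after 1000 tokens:", len(S), "x", len(S[0]))
```

However many tokens pass through the loop, the state stays `d_v x d_k`. Standard softmax attention, by contrast, stores keys and values for every past token.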

In testing, Qwen3.5-9B has been shown to beat models three times its size on benchmarks like GPQA and IFEval. It is natively multimodal, meaning it processes text, images, and video from the same set of weights. This is a massive leap forward for accessibility, as this model can run on consumer-grade hardware like an RTX 4090 or even a Mac with 12GB of RAM. You can find more data on this in the Qwen2 Technical Report and benchmark data, which highlights its reasoning and coding prowess.

Deployment Considerations for High-Performance Systems

At FDE Hydro™, we deal with massive power requirements every day. Deploying Multiple powerhouse models in a data center isn’t much different from managing a hydroelectric facility—you have to account for power, cooling, and structural integrity.

An H200 NVL module can pull up to 1,300 watts. Once you account for the rest of the system, the power demand can exceed 2,000W per module, and a rack full of these multiplies that figure. This level of heat cannot be managed by traditional air cooling. You need advanced liquid cooling solutions, such as direct-contact cold plates, which offer 2-3x better heat transfer than standard methods.
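A quick back-of-the-envelope calculation shows how those per-module figures add up at rack level. The sketch below uses the 2,000W per-module figure from the text as a lower bound. The module counts and the 20 kW air-cooling threshold are hypothetical placeholders, so substitute the limits of your own facility.

```python
MODULE_PEAK_W = 1300        # H200 NVL module peak draw, from the text
SYSTEM_PER_MODULE_W = 2000  # per-module draw including the rest of the system


def rack_power_kw(modules: int,
                  watts_per_module: float = SYSTEM_PER_MODULE_W) -> float:
    """Estimated rack draw in kilowatts for a given number of modules."""
    return modules * watts_per_module / 1000.0


def needs_liquid_cooling(rack_kw: float, air_limit_kw: float = 20.0) -> bool:
    """Compare rack draw with an assumed air-cooling limit.

    air_limit_kw is a hypothetical threshold for illustration only.
    """
    return rack_kw > air_limit_kw


for modules in (8, 16, 32):  # hypothetical rack configurations
    kw = rack_power_kw(modules)
    print(f"{modules} modules: {kw:.0f} kW, liquid cooling: {needs_liquid_cooling(kw)}")
```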

Organizations must also consider the “Return on Investment” (ROI). The H200 NVL typically costs about 40% more than two H200 SXM GPUs. Is the 282GB unified memory worth the premium? If your model is 70B parameters or larger, the answer is usually “yes,” because it simplifies your code and removes the latency of moving data between separate cards. If you’re building a modular infrastructure, you might want to Learn more about modular powerhouses to see how we approach scalability in high-stakes environments.

Frequently Asked Questions about Multi-Model Systems

What are the fundamental differences between NVIDIA H200 SXM and H200 NVL?

The H200 SXM is a single GPU designed for horizontal scaling in standard HGX servers. The H200 NVL is a dual-GPU module that uses an NVLink bridge to create a 282GB unified memory pool. Essentially, SXM is for “scaling out” (adding more units), while NVL is for “scaling up” (handling bigger individual tasks).

How does Nemotron 3 Nano achieve superior inference throughput?

Nemotron 3 Nano uses a hybrid Mamba-Transformer Mixture-of-Experts (MoE) design. By activating only a fraction of its total parameters (3.2B out of 31.6B) during a forward pass, it significantly reduces the computational load. This allows it to run up to 3.3x faster than similarly sized models such as Qwen3-30B.

Which workloads are best suited for H200 NVL versus SXM?

Choose H200 NVL for memory-bound tasks like massive LLMs (70B+ parameters), real-time fraud detection, or large-scale graph neural networks. Choose H200 SXM for compute-focused tasks that can be easily parallelized, such as computational fluid dynamics (CFD), financial modeling, or training mid-sized AI models.

Conclusion

Whether you are building a data center to run Multiple powerhouse models or constructing a modular dam to power a city, the principles of efficiency and scalability remain the same. At FDE Hydro™, our patented “French Dam” technology mirrors the modularity seen in the NVIDIA HGX architecture. We believe that by breaking complex systems down into smarter, precast concrete modules, we can reduce construction time and costs across North America, Brazil, and Europe.

Just as the H200 NVL unifies memory to solve the “memory wall,” our modular systems unify structural integrity and rapid deployment to solve the “infrastructure wall.” If you’re looking to upgrade your water control systems or start a new renewable energy project, Explore FDE Hydro’s modular dam solutions and see how we’re bringing powerhouse performance to hydropower.